Yao, C. & Fricker, P. (2024). Neural Network-Driven 3D Generation of Urban Trees: Advancing carbon mitigation simulation through detailed tree modeling from point cloud data, Proceedings of eCAADe, Vol. 1, pp. 605-614.

For more information please contact: chaowen.yao@aalto.fi

Supervisor: pia.fricker@aalto.fi

2024

Neural Network-Driven 3D Generation of Urban Trees

Advancing Carbon Mitigation Simulation through Detailed Tree Modeling from Point Cloud Data

Abstract

Urban digital twins are essential for climate-responsive urban planning but often fail to accurately represent trees, relying instead on oversimplified models that inadequately capture their environmental impact. Traditional methods for tree modeling, notably skeletonization, are both iterative and labor-intensive, leading to inefficiencies in environmental simulation accuracy. Addressing this gap, our study introduces a novel approach using a PointNet-based Convolutional Neural Network to generate precise 3D tree models from mobile laser-scanned point clouds, significantly enhancing simulations for carbon mitigation efforts. Our method, tested in Helsinki’s Jätkäsaari area, leverages pre-defined skeleton data to train the neural network, streamlining the extraction of movement direction and distance, thus bypassing traditional skeletonization’s iterative nature. We further refine our model’s accuracy and robustness by incorporating point clouds of varying densities and tailoring our approach to account for the morphological diversity of specific tree species. This specificity enables our models to more closely mirror real-world trees, making them invaluable for dynamic environmental modeling within urban digital twins. Moreover, our models support integration with the L-system, a prominent plant growth simulation algorithm, showcasing the potential of advanced neural networks to revolutionize computational architecture and foster precise, sustainable urban environmental simulations.

Aim

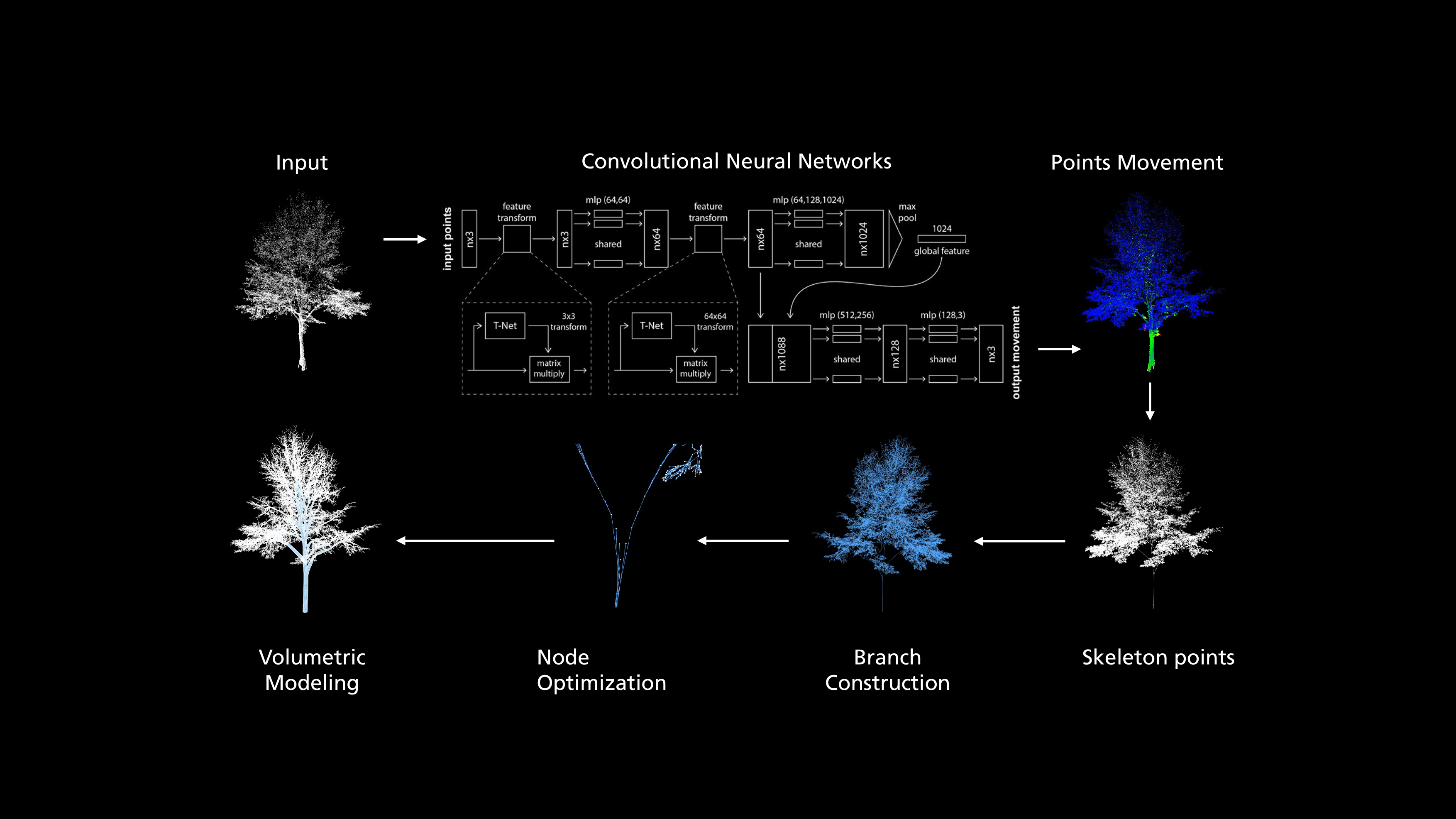

Our research addresses this gap by introducing a PointNet-based Convolutional Neural Network to generate accurate 3D models of urban trees from point clouds. This innovation transcends traditional tree modeling methods, which are both time-consuming and inadequate in portraying the complex morphological traits essential for precise environmental simulations. By advancing tree model precision within urban digital twins, our work not only enhances the simulation of urban climates but also illustrates the potential of integrating advanced neural networks into computational architecture for sustainable urban planning. This initiative is poised to enrich the functionality of urban digital twins, enabling more accurate and dynamic environmental modeling, essential for addressing the pressing challenges of urban sustainability and resilience.

Method

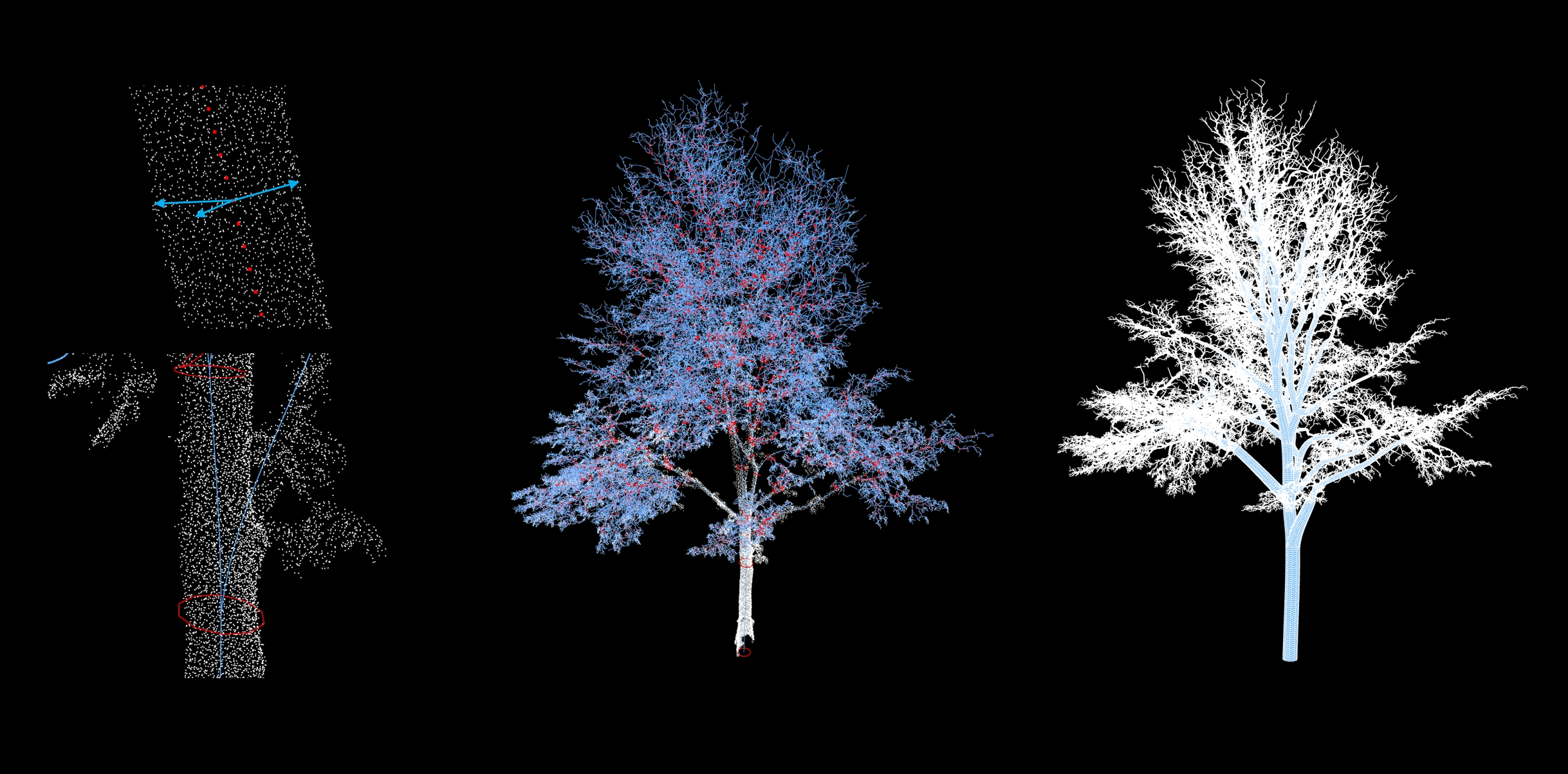

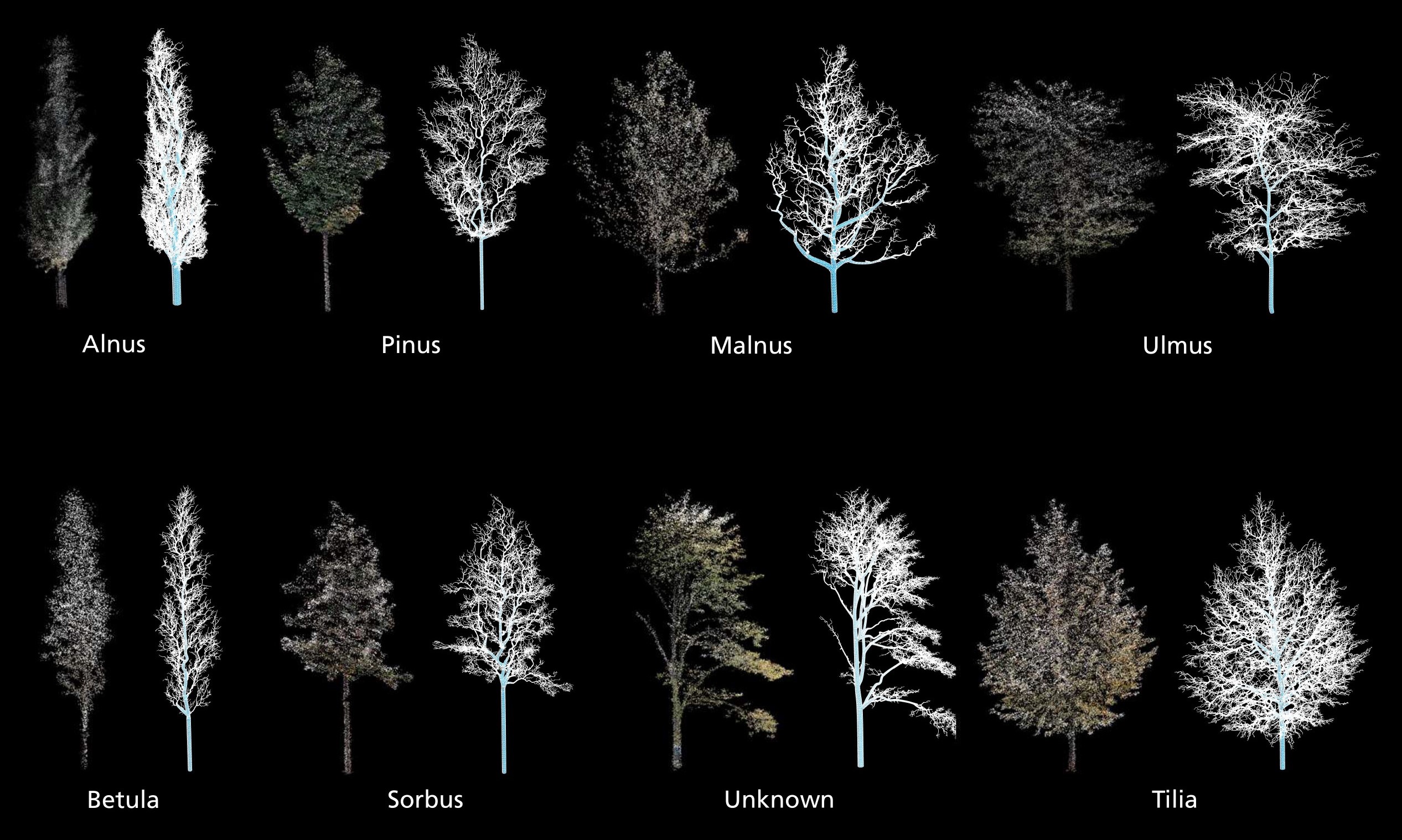

We initiated a comprehensive labeling process within the pilot area, identifying around 1000 trees. These were systematically divided into training, validation, and test datasets, with proportions set at 0.8, and 0.2, respectively. The labeled point clouds included essential data such as spatial coordinates, directional movement, and radial information, as visually represented in Figure 2. The datasets were grouped by species, with each point P_i in the cloud annotated with both its spatial coordinates (x,y,z) and a species identifier. To enhance computational efficiency, we standardized each point cloud by centering every point around the mean (P_i' = P_i - P_{mean}). Additionally, we used padding and subsampling to limit each point cloud file to 40,000 points. For data augmentation, we produced n+1 subsamples when the input point cloud had n * 10,000 + 40,000 points.

We trained a neural network to capture the structure of trees based on the PointNet architecture, which is widely applied for point cloud classification and segmentation (Guo et al., 2021). This architecture was selected for its reduced computational requirements compared to traditional Convolutional Neural Networks (CNNs) and its robustness in point cloud processing, especially for the incomplete scans that are common in real mobile laser scanning datasets (Qi et al., 2017). The model takes points as input, first applies input and feature transformations, and then aggregates features by max pooling. Batch normalization with ReLU is used for all layers. We modified the output dimensions to predict the centralizing movement of each point, that is, its shift toward the medial axis. Finally, the model concatenates global and local features and outputs the predicted point movement; dropout layers are used to prevent overfitting.
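The data preparation steps described above (centering, fixing each sample at 40,000 points, and producing n+1 subsamples) can be sketched as follows. This is a minimal illustration, not the authors' code: the function names are hypothetical, the subsamples are assumed to be drawn at random, and padding is assumed to be done by re-drawing existing points, since the text does not specify either detail.

```python
import numpy as np

TARGET = 40_000  # points per sample (from the text)
STEP = 10_000    # extra points needed for each additional subsample (from the text)


def center(points):
    """Subtract the per-axis mean so the cloud is centered on the origin."""
    return points - points.mean(axis=0)


def fix_size(points, rng, target=TARGET):
    """Return exactly `target` points.

    Larger clouds are randomly subsampled without replacement.
    Smaller clouds are padded by re-drawing existing points (an assumption).
    """
    n = len(points)
    if n >= target:
        idx = rng.choice(n, size=target, replace=False)
    else:
        extra = rng.choice(n, size=target - n, replace=True)
        idx = np.concatenate([np.arange(n), extra])
    return points[idx]


def make_samples(points, seed=0, target=TARGET, step=STEP):
    """Center an (N, 3) cloud and return n + 1 fixed-size samples,
    where n = (N - target) // step, and n = 0 for clouds below `target`."""
    rng = np.random.default_rng(seed)
    centered = center(points)
    n_extra = max(0, (len(points) - target) // step)
    return [fix_size(centered, rng, target) for _ in range(n_extra + 1)]
```

Under these assumptions, a cloud of 60,000 points yields three samples of 40,000 points each, while a cloud smaller than 40,000 points yields a single padded sample.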

The neural network’s predictions of directional vectors \vec{D_i} and radial distances R_i are used to shift the tree points so that they approximate the medial axis. A Fixed Radius Nearest Neighbors (FRNN)-based algorithm then constructs a neighborhood graph, using R_i as the search radius to identify the set of adjacent points N_i for each point. If fewer than five neighbors are found (|N_i| < 5), a K-Nearest Neighbors (KNN) search is used instead to ensure a minimum adjacency count of five (|N_i| = 5).
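A brute-force sketch of this neighborhood rule is given below. It assumes the points have already been shifted toward the medial axis, and the function name `build_neighborhoods` is hypothetical. The full pairwise distance matrix is used only for clarity; for clouds of 40,000 points a spatial index such as a k-d tree would be the practical choice.

```python
import numpy as np


def build_neighborhoods(points, radii, k_min=5):
    """For each point i, return the indices of all other points within radii[i].

    If fewer than k_min points fall inside the radius, fall back to the
    k_min nearest neighbors. Assumes the cloud has more than k_min points.
    """
    # Pairwise Euclidean distances, O(N^2) memory: illustration only.
    dists = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    np.fill_diagonal(dists, np.inf)  # a point is never its own neighbor

    neighborhoods = []
    for i in range(len(points)):
        within = np.flatnonzero(dists[i] <= radii[i])  # fixed-radius search
        if len(within) < k_min:
            within = np.argsort(dists[i])[:k_min]      # KNN fallback
        neighborhoods.append(within)
    return neighborhoods
```

Note that the resulting graph is not necessarily symmetric, because each point uses its own radius; whether the original method symmetrizes the graph is not stated.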

The construction of branches employs Dijkstra’s Shortest Path (DSP) algorithm, based on the premise that each point in the point cloud represents a potential branch or leaf. With the lowest point set as the root node, DSP identifies the shortest paths to all other nodes in the graph, generating an optimized path tree. Initially containing superfluous branches as shown in Figure 2, the tree is refined by adjusting the KNN threshold, selectively pruning nodes based on their distance from parent nodes. The final step involves assigning radial values at the ends of branches and using R_i to model cylinders, completing the detailed representation of tree branches.
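The shortest-path step can be illustrated with a standard Dijkstra implementation rooted at the lowest point. This is a sketch under stated assumptions: edge weights are taken to be Euclidean distances between neighboring points, the z coordinate is taken as height, and the function name is hypothetical. The pruning and cylinder-fitting steps are not shown.

```python
import heapq
import math


def shortest_path_tree(points, neighbors):
    """Dijkstra's algorithm from the lowest point (smallest z).

    points:    sequence of (x, y, z) coordinates
    neighbors: neighbors[i] is an iterable of point indices adjacent to i
    Returns (root, parent, dist); parent[i] is the previous node on the
    shortest path from the root to i, or None for the root and for
    unreachable points.
    """
    root = min(range(len(points)), key=lambda i: points[i][2])
    dist = [math.inf] * len(points)
    parent = [None] * len(points)
    dist[root] = 0.0
    heap = [(0.0, root)]

    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale queue entry
        for v in neighbors[u]:
            nd = d + math.dist(points[u], points[v])
            if nd < dist[v]:
                dist[v] = nd
                parent[v] = u
                heapq.heappush(heap, (nd, v))
    return root, parent, dist
```

Following `parent` links from any point back to the root traces one candidate branch path; the union of all such paths forms the path tree that is subsequently pruned.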

Validation

We used the mean squared error (MSE=\frac{1}{n}\sum_{i=1}^{n}{(y_i-y_i^p)}^2), where y_i is the labeled value and y_i^p the predicted value, as the loss for training the point movement model, since point movement is a continuous quantity that cannot be judged as simply correct or incorrect. We also calculated the mean absolute error (MAE=\frac{1}{n}\sum_{i=1}^{n}{|y_i-y_i^p|}) for each epoch, because it gives anomalies in the training set less weight than the MSE does. We selected a learning rate of 0.001 through comparison experiments; the evaluation is shown in Figure 3. Training was stopped when the training loss had not improved for 10 epochs. At the end of training, the training loss was 0.862 and the training MAE was 1.389, while the validation loss was 0.096 and the validation MAE was 0.234.
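The two metrics and the stopping rule can be written out as a short sketch. The function names are hypothetical, the metrics are shown for flat sequences of values (vector-valued movements would be flattened per component first), and the training loop itself is omitted.

```python
def mse(y_true, y_pred):
    """Mean squared error over paired sequences of numbers."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error over paired sequences of numbers."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def stopping_epoch(losses, patience=10):
    """Return the index of the epoch at which training would stop,
    i.e. the first epoch after `patience` consecutive epochs without a
    new best training loss, or None if that never happens."""
    best = float("inf")
    stale = 0
    for epoch, loss in enumerate(losses):
        if loss < best:
            best, stale = loss, 0
        else:
            stale += 1
            if stale >= patience:
                return epoch
    return None
```

For errors of 1 and 3 on two points, the MSE is 5.0 and the MAE is 2.0, which shows how the squared term lets the larger error dominate the MSE.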

Discussion

Refining the neural network model to improve its accuracy and efficiency remains a priority. The model currently underfits because the large number of leaf points interferes with capturing the tree structure. In addition, ground and infrastructure points included in the input cause modeled trunks to shift or to be assigned an incorrect radius. Future work will focus on optimizing the network architecture and its robustness to noise and interfering points, so that it accommodates the complex variability of urban tree structures more effectively. Because the captured point clouds lack reliable color information, we will also improve the process for discriminating tree branches from leaves before training.

Expanding the model’s capability to include a wider range of tree species is essential. Such expansion will enable our tool to offer comprehensive solutions for urban forestry management and planning, encompassing a greater diversity of urban green spaces. Automating the removal of non-tree elements and the labeling of real trees within point clouds is crucial for scaling the methodology to larger urban areas. This automation aims to streamline data preparation, reducing the labor and time investment required. Exploring the integration of our tree modeling tool with existing urban planning and environmental management platforms will facilitate informed decision-making regarding urban green space design and biodiversity conservation.